Meta announced on Tuesday that it is expanding its efforts to protect young users on Facebook and Instagram. The update introduces new settings for teens, including content restrictions and the hiding of search results related to self-harm and suicide.

Meta, the parent company of Facebook and Instagram, said the new policies will supplement its existing set of more than 30 well-being and parental supervision tools designed to protect young users.

The announcement follows increased scrutiny of Meta's potential impact on teen users. In November, whistleblower Arturo Bejar, a former Facebook employee, testified before a Senate subcommittee, raising concerns about the sexual harassment of teens on Instagram and accusing Meta's top executives, including CEO Mark Zuckerberg, of ignoring warnings for years.

Court documents from lawsuits against Meta in November and December revealed allegations that the company knowingly allowed children under 13 to hold accounts, collected their personal information without parental consent, and created a "breeding ground" for child predators.

The recent pressure comes approximately two years after another Facebook whistleblower, Frances Haugen, released internal documents known as the "Facebook Papers," prompting Meta and other social platforms to enhance their protections for teen users.

In a blog post outlining the new policies, Meta said it is committed to providing safe, age-appropriate experiences for teens on its platforms. The changes hide age-inappropriate content, such as posts discussing self-harm or eating disorders, nudity, and restricted goods, from teens' feeds and stories. All teens on Facebook and Instagram will now be placed in the most restrictive content recommendation settings by default.

Meta also expanded the range of search terms related to self-harm, suicide, and eating disorders for which results will be hidden. It plans to share resources from organizations like the National Alliance on Mental Illness when users post content related to struggles with self-harm or eating disorders.

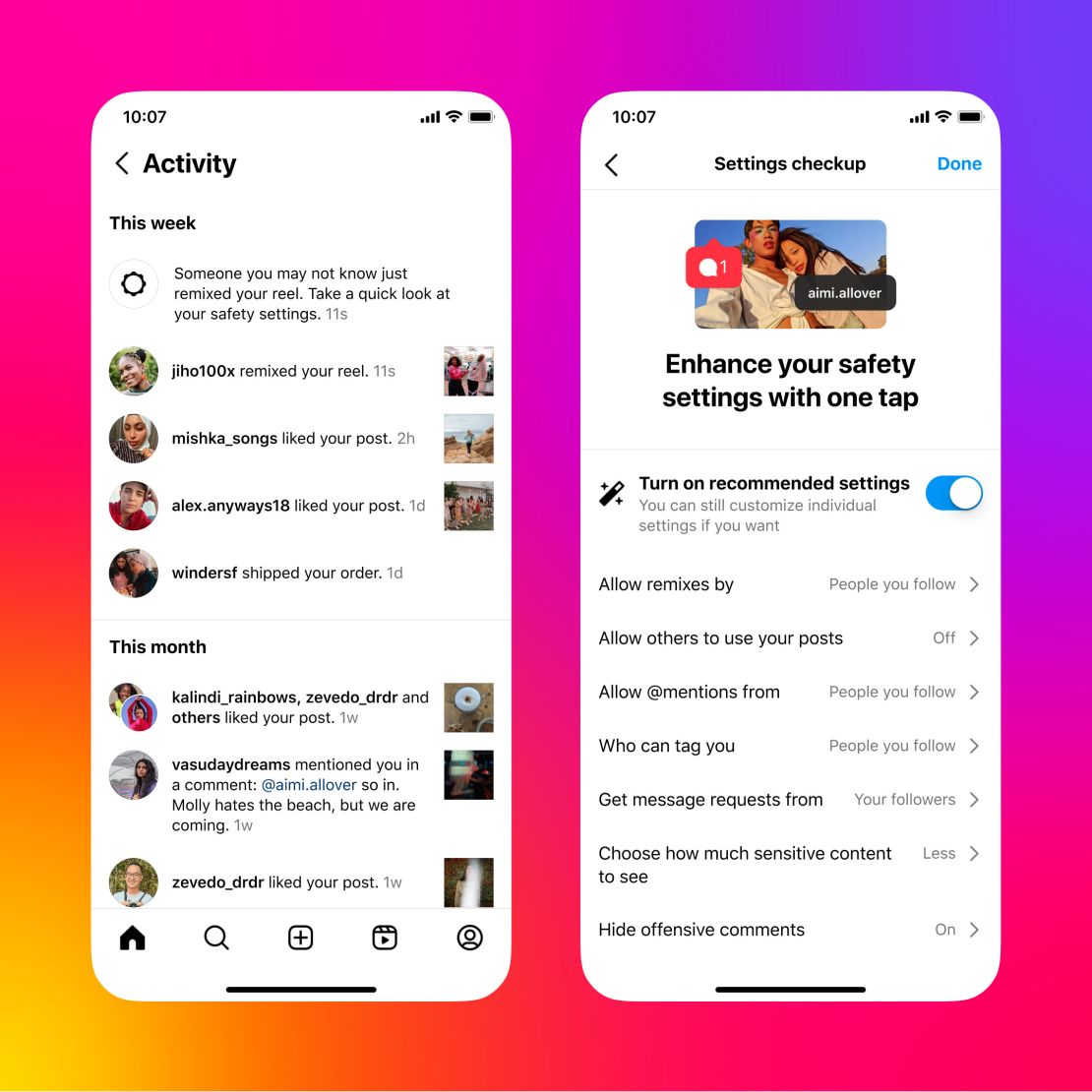

Additionally, Meta will prompt teen users to review their safety and privacy settings and offer a one-tap option to turn on recommended settings. These settings automatically restrict who can repost their content, remix or share their reels, and tag, mention, or message them, and they help hide offensive comments.

These updates aim to address concerns about the ease with which adult strangers can message and proposition young users on Facebook and Instagram. They complement Meta's existing teen safety and parental control tools, including features like monitoring screen time, reminders to take breaks, and notifications for parents if their teen reports another user. The changes are slated to roll out to users under 18 in the coming months.